On-premise AI agents: a future foundation for education, academia, and industry

Abstract

The rapid advancement of artificial intelligence (AI) has fundamentally transformed digital workflows, and the emergence of AI agents is revolutionizing how we learn, conduct research, and drive productivity. However, reliance on cloud-based AI infrastructure introduces critical challenges, including unstable network connectivity, data security risks, and high operational costs. In response, on-premise AI agents are gaining prominence as a secure, reliable, and cost-effective alternative. This review explores the rise of on-premise AI agents, analyzes the inherent limitations and risks of cloud-dependent systems, and highlights applications of locally deployed AI agents in educational, academic, and industrial settings.

INTRODUCTION

Artificial intelligence (AI) has been developing for decades. In particular, the rapid advancement of machine learning (ML) algorithms, together with enhanced computing power, drew worldwide attention to AI after the emergence of AlphaGo in 2016[1], further accelerating the field’s development. Subsequently, ML has been adopted in various scientific disciplines, including chemistry[2-4], biology[5,6], and materials science[7-9], among others, to facilitate scientific discovery. In 2017, the landmark paper “Attention Is All You Need” proposed the transformer architecture[10], which further accelerated the development of large language models (LLMs)[11]. Compared with traditional AI algorithms and models, LLMs show improvements in understanding and generating human language. However, text-only input and output paradigms cannot fully meet the requirements of broader applications[11,12]. Therefore, AI agents based on LLMs have emerged as a new research hotspot, driving transformation across multiple sectors of society.

Agentic AI refers to a paradigm and architectural approach in which AI systems exhibit strong agency, including the ability to autonomously set or pursue goals, reason, plan multi-step actions, adapt to changes, and interact with tools/environments with minimal human supervision. This often involves orchestrating multiple AI agents (or a single sophisticated agent) to handle complex and open-ended workflows. AI agents, in turn, are concrete implementations of such systems: autonomous entities that perceive their environment, reason about tasks, plan action sequences, execute actions (often via tools), and continuously learn or adapt over time[13]. The key characteristics of AI agents include autonomy, reasoning and planning, perception, action, and learning and adaptation. Early-stage (pre-LLM) AI agents shared limitations such as weak self-learning, limited generative reasoning, and poor adaptability to unstructured or evolving environments, and were therefore far less advanced than fully realized agentic AI systems. In contrast, AI agents in the LLM era have attracted growing interest and have been introduced to education, academia, and industry. The utilization of AI agents can significantly enhance the efficiency of study, research, and product development[4,13,14]. Furthermore, well-labeled and customized databases can further improve the performance of AI agents in these specific applications.

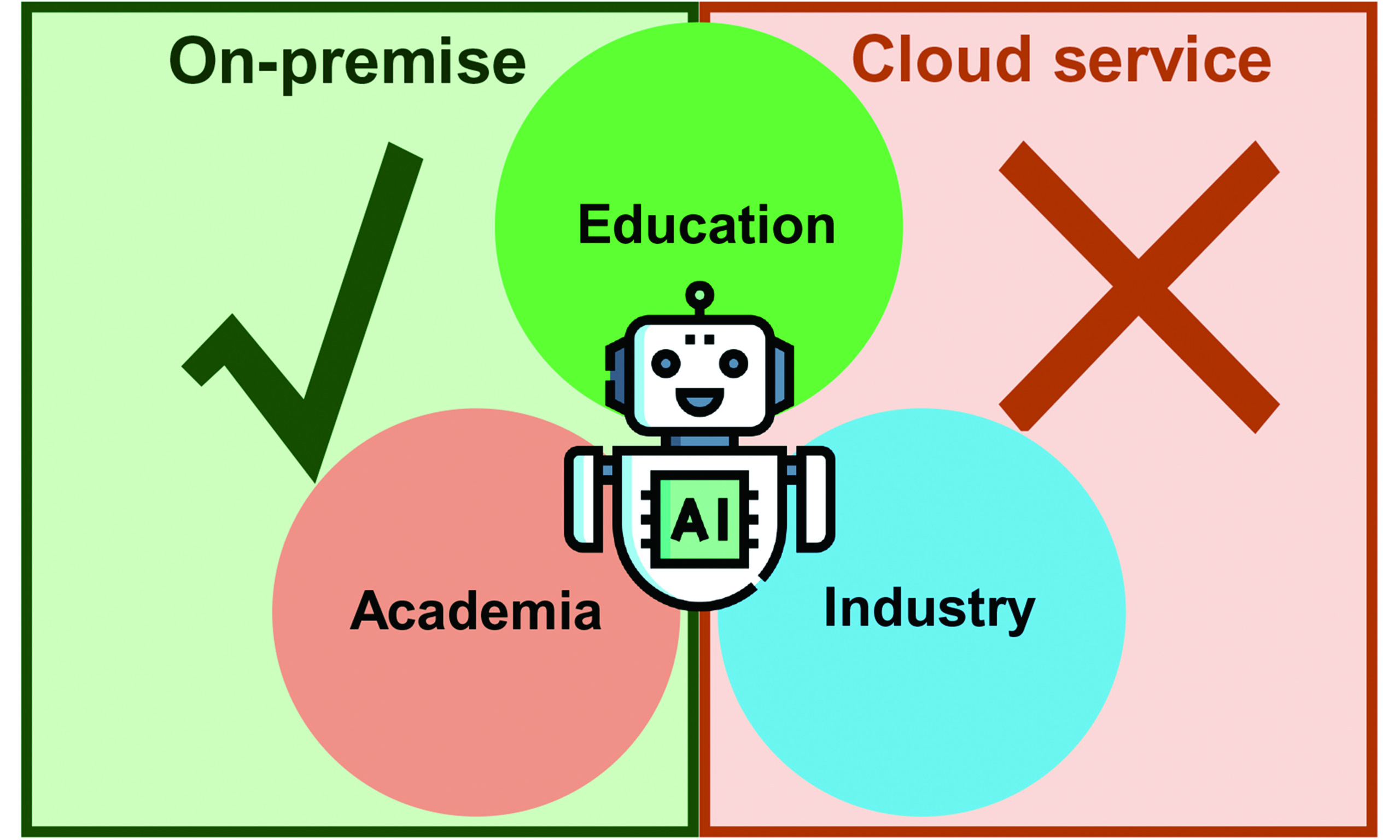

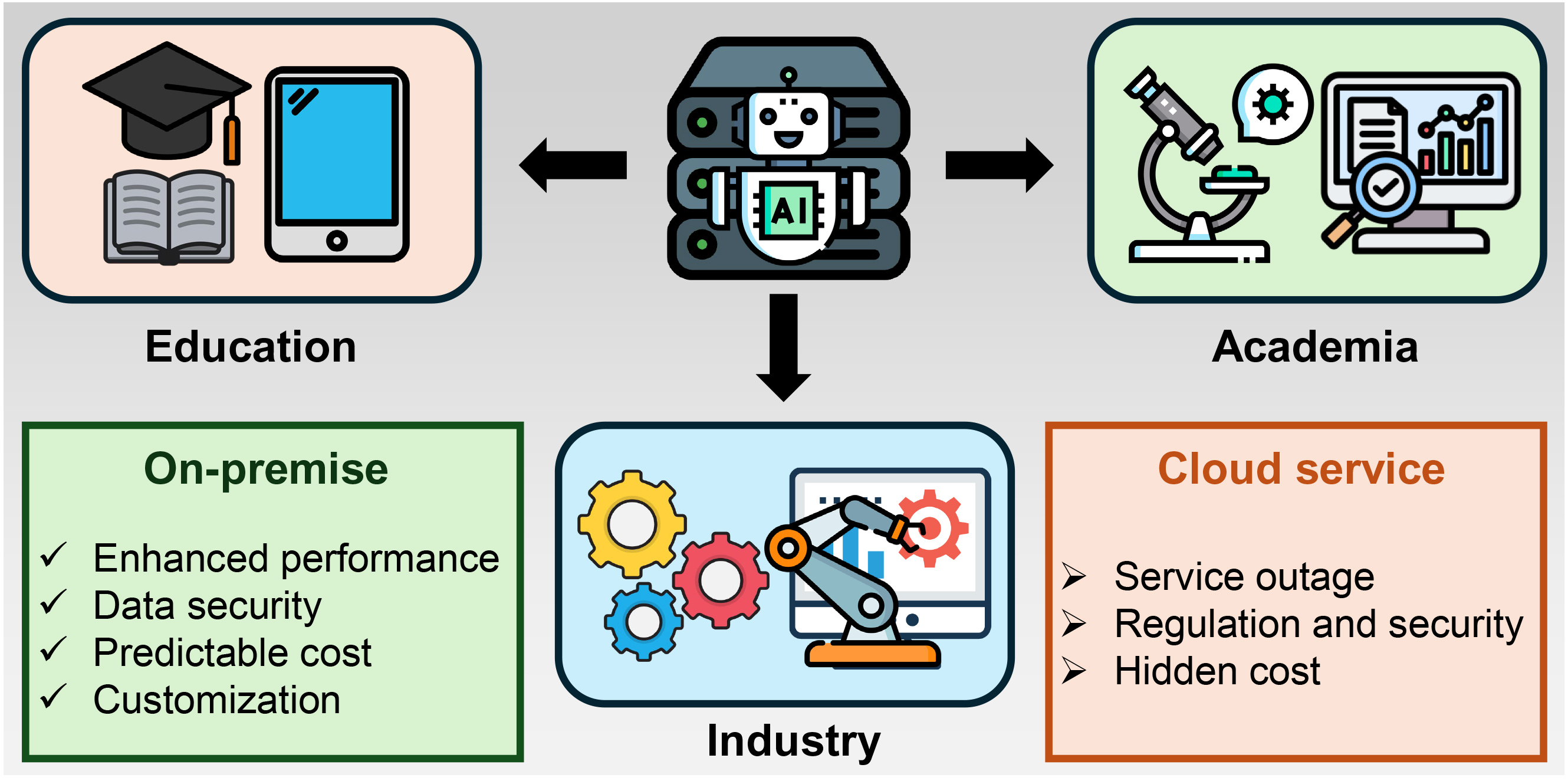

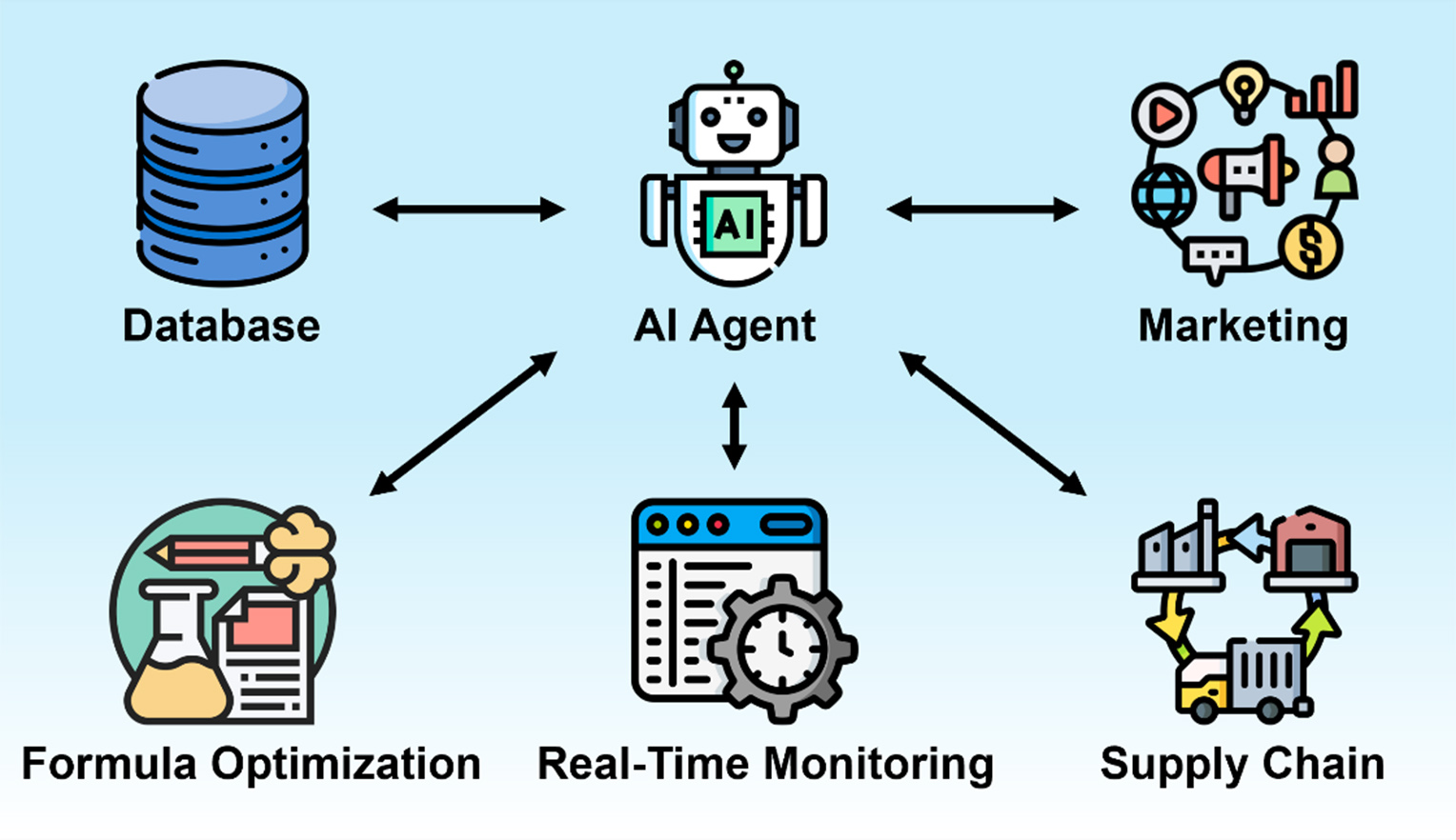

For most daily applications, databases and corresponding AI agents can be deployed through cloud services. However, risks such as service outages, regulatory issues, and hidden costs may disrupt service use and lead to economic loss and broader social consequences. In contrast, because of their high performance, stronger data security, predictable costs, and greater customization potential, on-premise AI agents represent a promising alternative to cloud-based services. In this review, the applications of AI agents in education, research, and industry are summarized, along with the risks associated with cloud-based AI agent deployment and the advantages of on-premise AI agents [Figure 1], indicating the bright future of on-premise AI agents.

APPLICATIONS OF DATABASES WITH AI AGENTS

Applications of AI agents in education

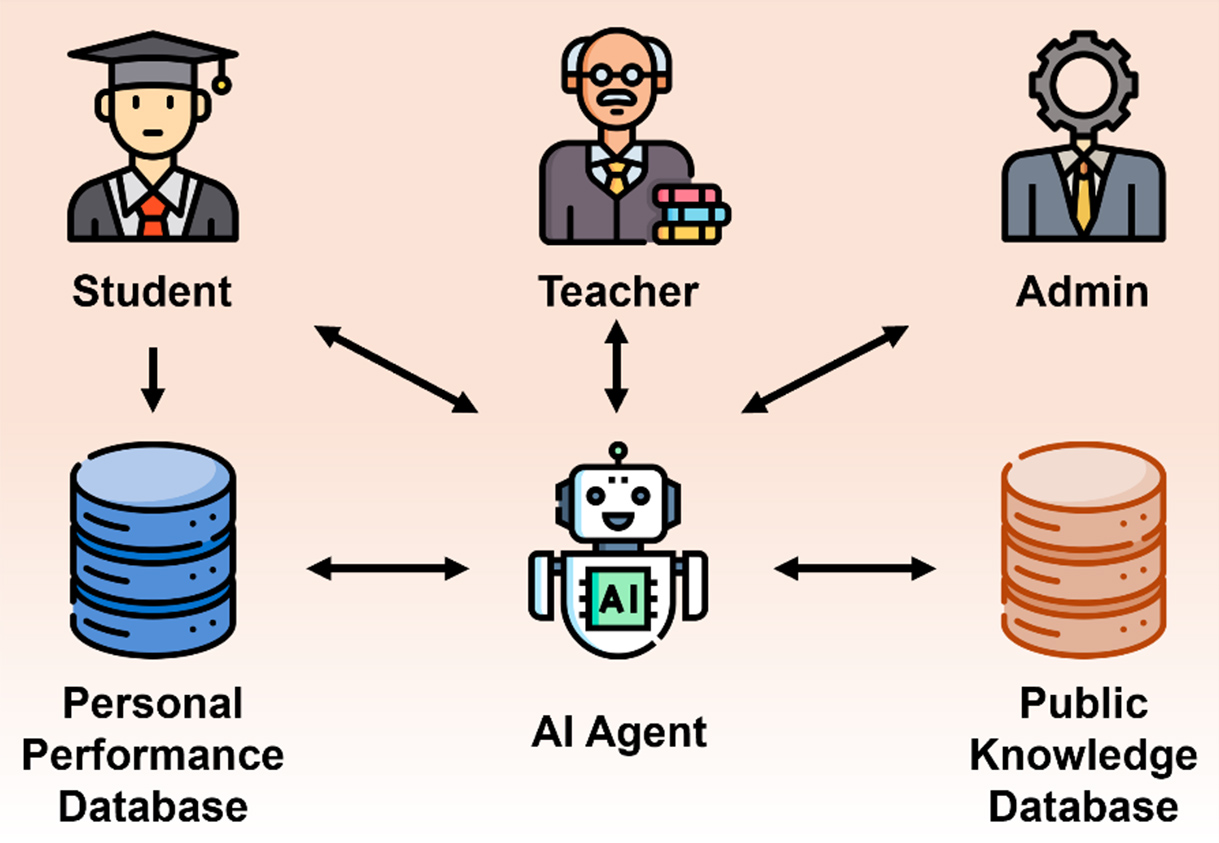

The integration of AI agents with educational databases enables highly personalized and data-driven educational systems [Figure 2][15,16]. The core benefit lies in the AI agent’s ability to use the database as a long-term memory store for individual student performance data, such as scores, pacing, and engagement metrics, to generate real-time cognitive models[15,17]. The agent employs these models to dynamically adjust the difficulty, content, and sequence of learning modules, and provide immediate, granular feedback tailored to students’ specific misconceptions, thereby moving beyond static and delayed human review. This adaptive mechanism ensures that each student follows an optimal learning path, maximizing comprehension while minimizing cognitive load.
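The adaptive mechanism described above can be illustrated with a minimal sketch. It assumes a hypothetical per-student record that holds a running mastery estimate; the names `StudentRecord`, `update_mastery`, and `next_difficulty`, the smoothing factor, and the difficulty thresholds are illustrative assumptions and are not taken from the cited systems.

```python
from dataclasses import dataclass, field


@dataclass
class StudentRecord:
    """Hypothetical long-term memory entry for one student."""
    student_id: str
    mastery: float = 0.5                      # running estimate in [0, 1]
    history: list = field(default_factory=list)


def update_mastery(record: StudentRecord, correct: bool, alpha: float = 0.3) -> float:
    """Exponential moving average of correctness (alpha is an assumed smoothing factor)."""
    outcome = 1.0 if correct else 0.0
    record.mastery = (1 - alpha) * record.mastery + alpha * outcome
    record.history.append(correct)
    return record.mastery


def next_difficulty(record: StudentRecord) -> str:
    """Map the mastery estimate to a difficulty band (thresholds are illustrative)."""
    if record.mastery < 0.4:
        return "easy"
    if record.mastery < 0.75:
        return "medium"
    return "hard"


# Example: four answers update the stored estimate, then the next band is chosen.
record = StudentRecord("s-001")
for answer in (True, True, True, False):
    update_mastery(record, answer)
print(next_difficulty(record), round(record.mastery, 3))
```

A production system would likely replace the moving average with a richer cognitive model, but the structure (persist the estimate, update it after each interaction, select content from it) would stay the same.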

Furthermore, AI agents significantly boost operational efficiency for educators and institutions[15,18]. They achieve this by automating administrative workloads and managing repetitive tasks such as scheduling, resource allocation, and initial query handling, thereby freeing instructors to focus on mentorship and complex pedagogical tasks. Importantly, this integration also enables predictive analytics for early intervention. By applying ML models to longitudinal database records (attendance, past failures, socio-economic factors), AI agents can identify students at risk of academic failure with high accuracy[19]. This capability allows for proactive and targeted interventions before failure occurs, fundamentally improving retention rates.
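To make the early-intervention idea concrete, the following sketch scores students with a logistic function over a few longitudinal features. The weights, bias, feature names, and threshold are hand-picked placeholders for illustration only; in practice they would be fitted to historical records, and the cited work may use a different model entirely.

```python
import math

# Illustrative, hand-picked weights; a real system would fit these to historical data.
WEIGHTS = {"absence_rate": 3.0, "past_failures": 0.8, "avg_score": -4.0}
BIAS = 0.5


def risk_probability(features: dict) -> float:
    """Logistic score in (0, 1) computed from a student's longitudinal features."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def flag_at_risk(students: dict, threshold: float = 0.5) -> list:
    """Return the IDs whose estimated risk meets or exceeds the assumed threshold."""
    return sorted(sid for sid, f in students.items() if risk_probability(f) >= threshold)


# Hypothetical records: rates and scores are fractions, failures are counts.
students = {
    "s-001": {"absence_rate": 0.05, "past_failures": 0, "avg_score": 0.85},
    "s-002": {"absence_rate": 0.40, "past_failures": 2, "avg_score": 0.45},
}
print(flag_at_risk(students))
```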

Finally, the AI-database coupling facilitates continuous curriculum improvement[20]. AI agents analyze aggregated performance data to pinpoint specific knowledge gaps or curriculum components that consistently cause widespread student difficulties. These data-driven insights inform educators and administrators in empirically refining and optimizing teaching strategies and course content, driving iterative pedagogical advancements. Overall, this integrated model moves education from assessment of learning to assessment for learning, positioning AI agents and databases as essential components of future educational research and practical deployment.
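The aggregation step behind such curriculum insights can be sketched as follows, assuming responses are available as `(student_id, topic, correct)` tuples. The function name `find_knowledge_gaps` and both thresholds are assumptions chosen for illustration.

```python
from collections import defaultdict


def find_knowledge_gaps(responses, max_correct_rate=0.6, min_attempts=3):
    """Return (topic, correct_rate) pairs for topics that many students get wrong.

    `responses` is an iterable of (student_id, topic, correct) tuples; the
    thresholds are assumed values, not recommendations.
    """
    attempts = defaultdict(int)
    correct = defaultdict(int)
    for _student, topic, is_correct in responses:
        attempts[topic] += 1
        correct[topic] += int(is_correct)
    gaps = [
        (topic, correct[topic] / attempts[topic])
        for topic in attempts
        if attempts[topic] >= min_attempts
        and correct[topic] / attempts[topic] <= max_correct_rate
    ]
    return sorted(gaps, key=lambda item: item[1])  # weakest topics first


# Hypothetical response log for two topics.
responses = [
    ("a", "fractions", False), ("b", "fractions", False), ("c", "fractions", True),
    ("a", "algebra", True), ("b", "algebra", True), ("c", "algebra", True),
]
print(find_knowledge_gaps(responses))
```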

Applications of AI agents in research

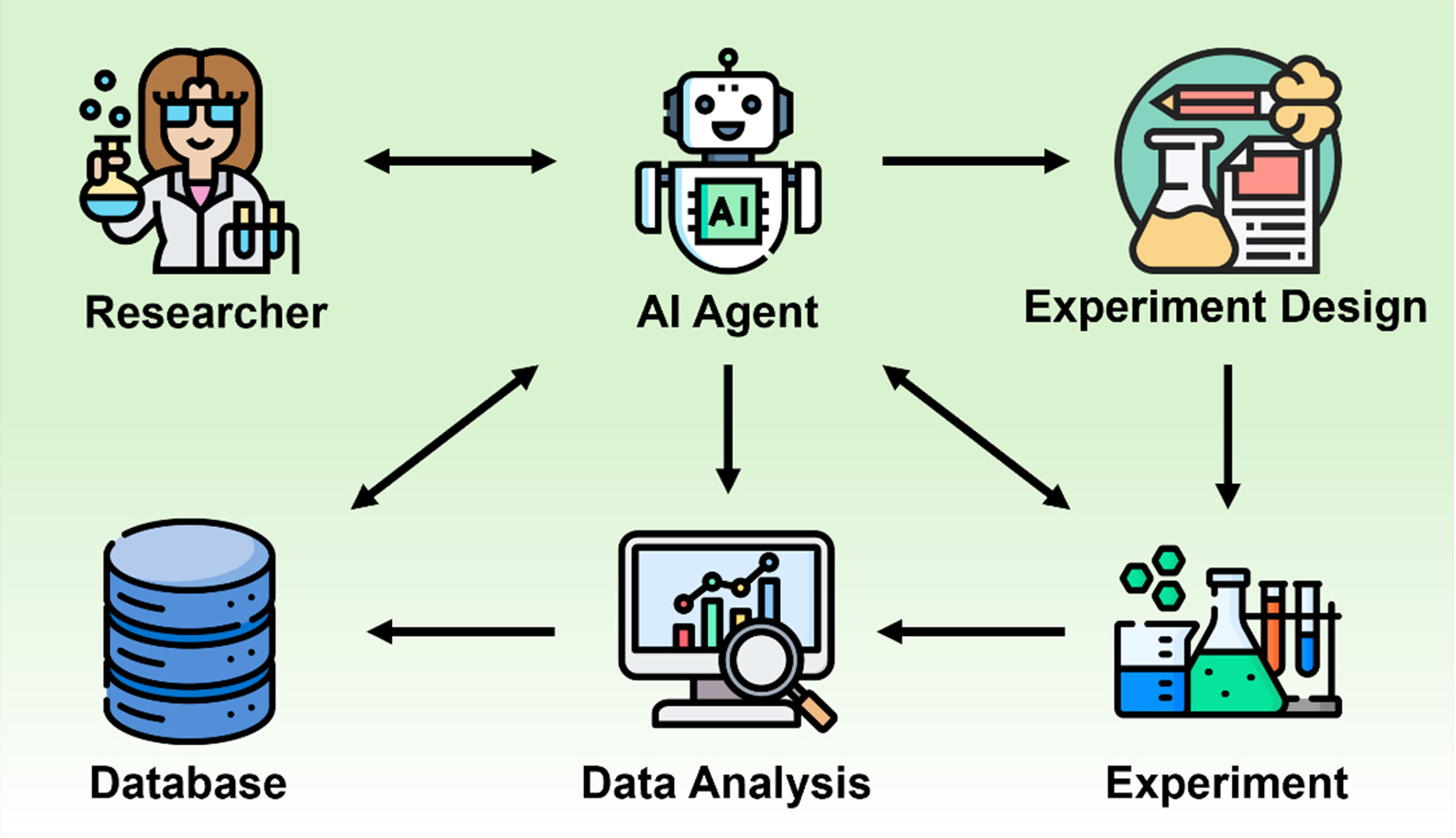

AI agents are designed to set goals, plan multi-step workflows, and execute complex tasks with minimal human intervention. In scientific research, they are fundamentally shifting the paradigm from simple tools to digital collaborators capable of driving discovery cycles at unprecedented speeds.

The primary benefit of AI agents is the dramatic acceleration and augmentation of the research process [Figure 3][21]. Specialized AI agents can function as dedicated lab partners. For instance, they can autonomously query vast scientific databases, extract relevant data, and synthesize findings to identify emerging trends and knowledge gaps[13]. Subsequently, they can analyze complex, multi-omic datasets to propose novel research directions that might be undetectable to human analysis. Furthermore, they can directly interface with lab automation tools, simulating experiments, refining parameters, and learning from outcomes in a continuous iterative cycle. This capability enables researchers, for example, in drug discovery, to progress from target identification to in silico testing within minutes rather than days.

Applications of AI agents in industry

The rise of AI agents represents a pivotal shift in industrial automation, moving beyond fixed robotic process automation toward systems with true autonomy and advanced reasoning capabilities. An AI agent is a goal-driven system that can perceive its environment, formulate multi-step plans, execute actions using various digital tools [such as application programming interfaces (APIs) and databases], and learn from outcomes, all with minimal human oversight[13]. These intelligent systems effectively function as digital knowledge workers, tackling complex, multivariable challenges that were previously too ambiguous for conventional ML models[13,22]. Their core value lies in continuous, adaptive operation, which enhances enterprise continuity and productivity across the board by augmenting human capabilities.
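The perceive, plan, act, and learn cycle described above can be reduced to a toy loop. This is a deliberately simplified sketch: `MiniAgent` and its two numeric "tools" are hypothetical, planning is a one-step lookahead rather than LLM-based reasoning, and "learning" is limited to logging outcomes.

```python
from typing import Callable, Dict, List


class MiniAgent:
    """Toy goal-driven agent: perceive, plan, act via tools, and record outcomes."""

    def __init__(self, tools: Dict[str, Callable[[float], float]]):
        self.tools = tools
        self.memory: List[tuple] = []        # (state, tool, new_state) outcomes

    def plan(self, state: float, goal: float) -> str:
        """One-step lookahead: pick the tool whose result lands closest to the goal."""
        return min(self.tools, key=lambda name: abs(self.tools[name](state) - goal))

    def run(self, state: float, goal: float, max_steps: int = 20) -> float:
        for _ in range(max_steps):
            if state == goal:                               # perceive: goal reached
                break
            tool = self.plan(state, goal)                   # plan
            new_state = self.tools[tool](state)             # act
            self.memory.append((state, tool, new_state))    # learn (here: log only)
            state = new_state
        return state


# Hypothetical tools standing in for API or database calls.
tools = {"increment": lambda x: x + 1, "double": lambda x: x * 2}
agent = MiniAgent(tools)
print(agent.run(state=1, goal=10), [step[1] for step in agent.memory])
```

In an LLM-based agent, `plan` would be replaced by model-driven reasoning and the tools by real integrations, but the control flow would follow the same loop.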

In manufacturing and logistics, AI agents are transforming operational efficiency [Figure 4]. Data administration and analysis are primary capabilities of AI agents in industry. Data accumulated through digitalization can be analyzed by AI agents to optimize formulas for the development and production of new products[23]. In factories, AI agents can continuously monitor real-time sensor data from machinery and instantly adjust flow rates or material consumption to maintain optimal production conditions, leading to significant waste reduction and improved equipment effectiveness[24]. Logistics companies deploy AI agents for supply chain optimization to forecast demand fluctuations and dynamically reroute shipments based on real-time traffic or weather data, reducing transportation costs and improving global delivery efficiency[25].
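The sensor-driven adjustment described for factory settings can be sketched as a proportional correction. The function `adjust_flow`, the gain, the limits, and the sample readings are assumptions; the sketch also assumes that raising the flow rate raises the measured quantity, which depends on the actual process.

```python
def adjust_flow(flow_rate, reading, setpoint, gain=0.5, min_flow=0.0, max_flow=100.0):
    """Proportional correction: nudge the flow rate toward the setpoint, within limits."""
    error = setpoint - reading
    return max(min_flow, min(max_flow, flow_rate + gain * error))


# Hypothetical stream of sensor samples processed one at a time.
flow = 40.0
for reading in (70.0, 76.0, 80.0, 83.0):
    flow = adjust_flow(flow, reading, setpoint=80.0)
print(flow)
```

An agent adds value on top of such a loop by choosing setpoints, gains, and limits from accumulated production data rather than leaving them fixed.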

RISKS OF CLOUD SERVICES

Although cloud services demonstrate several benefits in facilitating applications of AI agents, the inherent risks of relying on third-party cloud providers cannot be avoided. These risks range from data security concerns to the acute danger of widespread service outages.

Widespread service outages

The most immediate risk of cloud services is the fragility of connectivity and dependency on external infrastructure. Even major hyperscalers are not immune to failure. A prominent example occurred on October 20, 2025, when a major Amazon Web Services (AWS) outage originating in the US-EAST-1 region (Northern Virginia)[26] severely impacted a large portion of the internet backbone. The root cause was traced to a rare race condition within an automated Domain Name System (DNS) management system for the Amazon DynamoDB service, which triggered a cascade of infrastructure failures across multiple dependent systems. The effects were immediate and far-reaching: educational services and platforms went offline, corporate research and development (R&D) systems became inaccessible, and critical academic research processes were halted. Major services such as Slack, Snapchat, Fortnite, Reddit, and even parts of Amazon’s retail platform were affected for several hours[27]. Businesses could not process orders, universities faced disruptions in online exams, and remote workers experienced productivity loss. In addition, less than a month later, on November 18, 2025, a major Cloudflare outage took numerous websites and online services offline[28], further underscoring the risks and vulnerabilities associated with reliance on centralized cloud infrastructure.

Regulatory and security risks

Cloud services must navigate a complex labyrinth of international data regulations. The CLOUD Act (Clarifying Lawful Overseas Use of Data Act) in the US allows authorities to compel providers under US jurisdiction to disclose data regardless of where the data are stored, while complex data residency rules within the European Union mean that data stored on a server can also be subject to the laws of the country in which the physical server is located, regardless of where the data originate[29]. In addition, data containing classified or private information faces a high risk of leakage when stored online, raising significant security and economic concerns.

Hidden costs

Migrating to the cloud is often a one-way process due to vendor lock-in. Switching providers or repatriating data to on-premise infrastructure incurs egress costs and can be prohibitively expensive and technically challenging. This dependency limits an organization’s bargaining power and operational flexibility. Cloud providers can alter pricing models, terms of service, or hardware availability at their discretion, forcing clients to adapt or face significant disruption[30]. The true costs of data transfer and access can accumulate in opaque ways, making long-term financial planning difficult compared to the fixed costs of controlled on-premise environments[30].

Performance and scalability trade-offs

Cloud-based deployments of LLM-based AI agents provide elastic scalability and rapid provisioning, automatically handling bursty workloads (e.g., peak educational or research demand) with low upfront costs and access to the latest models. However, they introduce network latency that can hinder real-time agentic loops, incur per-token costs that escalate at scale, and reinforce vendor lock-in. In contrast, on-premise deployments deliver lower and more predictable inference latency (critical for time-sensitive perception-reasoning-action cycles in education, research, and industry), full data sovereignty, and improved long-term total cost of ownership (TCO), often breaking even with or surpassing cloud API-based services at ≥ 50 million tokens/month after hardware amortization[30]. Nonetheless, their drawbacks include high initial capital expenditure, slower manual scaling, and greater operational overhead. Hybrid models, which combine local latency-critical components with cloud burst capacity, are increasingly adopted as a balanced approach.
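One way to picture the hybrid model is as a routing rule applied to each request. The sketch below is an assumption-laden illustration: the `Request` fields, the function `route`, the queue-depth capacity, and the latency cutoff are all hypothetical and would need to be tuned for a real deployment.

```python
from dataclasses import dataclass


@dataclass
class Request:
    contains_sensitive_data: bool
    latency_budget_ms: int


def route(request: Request, local_queue_depth: int,
          local_capacity: int = 8, latency_cutoff_ms: int = 200) -> str:
    """Return "local" or "cloud" for one request (all thresholds are assumptions)."""
    if request.contains_sensitive_data:
        return "local"      # data sovereignty: sensitive data stays on site
    if request.latency_budget_ms <= latency_cutoff_ms:
        return "local"      # latency-critical agent step
    if local_queue_depth >= local_capacity:
        return "cloud"      # burst overflow for non-sensitive, non-urgent work
    return "local"


# A non-sensitive batch job arriving while the local queue is full goes to the cloud.
print(route(Request(contains_sensitive_data=False, latency_budget_ms=5000), local_queue_depth=8))
```

The ordering of the checks encodes the priority: data control first, latency second, and cloud capacity only as overflow.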

ADVANTAGES OF ON-PREMISE SOLUTIONS

The benefits of localized data and AI infrastructure are particularly pronounced in environments driven by innovation and strict governance requirements. These advantages extend beyond mere technical specifications to fundamental issues of intellectual property protection, regulatory compliance, and operational resilience.

Enhanced performance

Research often involves processing massive datasets, including genomics, physics simulations, and LLM training, all of which require immense computational power. While cloud computing offers this power, latency introduced by network transmission can be a bottleneck for time-sensitive or interactive AI agent operations[31]. In contrast, on-premise infrastructure allows for direct, high-speed connectivity between storage and computing resources[31]. This proximity removes wide-area network latency from the critical path and eliminates dependence on external internet connectivity. For R&D teams fine-tuning complex models, immediate data access and rapid iteration cycles can accelerate the discovery process. Furthermore, operational resilience is enhanced, as local systems are insulated from widespread internet outages or cloud provider failures, ensuring continuity of critical research workflows.

Data security and intellectual property protection

The most valuable assets of any academic institution or corporate R&D lab are its data and proprietary algorithms. In cloud environments, despite robust security measures, data are stored on shared infrastructure governed by service agreements that may change, and they may be subject to legal jurisdictions that differ from those of the organization’s home country, thereby introducing substantial risk[32].

An on-premise approach ensures absolute data sovereignty[33]. The organization retains physical and legal control over its data storage and processing units. This is paramount for R&D activities. When developing novel drugs, material science innovations, or confidential AI algorithms, keeping all data within a secure, private perimeter drastically reduces the risk of corporate espionage or unintended data leakage due to third-party breaches. In addition, academic institutions bound by sensitive research contracts with government or industry partners can guarantee compliance with strict security mandates, which often prohibit the use of public cloud infrastructure for certain data types.

Predictable cost

Cloud computing typically follows an operational expenditure model, which can lead to unpredictable “bill shock” when AI training workloads scale unexpectedly. In contrast, an on-premise setup, while requiring higher initial capital expenditure, offers greater long-term financial predictability[30]. The organization owns the infrastructure assets and can amortize their costs over several years. For consistent, high-intensity workloads typical of ongoing research projects, owning infrastructure often results in a lower TCO. This model enables dedicated resource allocation without the risk of escalating usage-based fees, providing a stable foundation for multi-year research initiatives.
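The cost comparison can be made explicit with a simple break-even calculation. This sketch assumes that on-premise cost is fixed per month (amortized hardware plus operating expenses, with electricity folded into the latter) and that cloud cost scales linearly with token volume. The function names and all numeric inputs are placeholders and are not figures from the cited analysis.

```python
def monthly_on_prem_cost(capex, amortization_months, monthly_opex):
    """Fixed monthly cost: hardware spread over its amortization period plus operations."""
    return capex / amortization_months + monthly_opex


def break_even_tokens_per_month(capex, amortization_months, monthly_opex,
                                cloud_price_per_million_tokens):
    """Monthly token volume above which on-premise is cheaper than per-token billing."""
    fixed = monthly_on_prem_cost(capex, amortization_months, monthly_opex)
    return fixed / cloud_price_per_million_tokens * 1_000_000


# Placeholder inputs in an arbitrary currency unit, chosen only to show the arithmetic.
tokens = break_even_tokens_per_month(
    capex=36_000, amortization_months=36, monthly_opex=500,
    cloud_price_per_million_tokens=10,
)
print(f"break-even at about {tokens / 1e6:.0f} million tokens per month")
```

Below the break-even volume, usage-based cloud billing is cheaper; above it, the fixed on-premise cost is spread over more tokens and the TCO advantage grows.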

Customization for specialized needs

Generic cloud instances are designed to serve a broad market, whereas specialized needs call for customized AI models and infrastructure. On-premise infrastructure provides complete control over the technology stack[34]. R&D teams can customize hardware to the exact specifications of their AI models and data processing needs[35,36]. This control also extends to software lifecycles: organizations can manage patch cycles, maintain consistent software versions for experimental reproducibility, and avoid mandatory updates imposed by cloud vendors that might disrupt ongoing critical workflows. This autonomy is vital for meeting the detailed requirements of scientific research and engineering.

CONCLUSION AND OUTLOOK

The shift toward on-premise AI agents represents a fundamental change in how education, academia, and industry adopt AI. By moving away from centralized cloud dependency, organizations can secure full sovereignty over sensitive data, protect student privacy and proprietary intellectual property, reduce latency in real-time workflows, and achieve greater long-term cost predictability.

The rapid maturation of efficient open-source LLMs, combined with increasingly powerful and affordable local hardware, will drive broader adoption in the coming years. We expect a transition from reliance on large, general-purpose cloud-hosted models to highly specialized, on-site fine-tuned AI agents tailored to targeted applications, such as secure R&D analysis, offline personalized tutoring, and closed-loop industrial optimization. While cloud infrastructure will continue to play a role in providing burst scalability and access to cutting-edge foundation models, the long-term future of high-stakes, domain-specific AI agent deployment is increasingly at the edge.

Importantly, on-premise deployments need not conflict with academia’s emphasis on collaboration and open science. Hybrid architectures enable a practical balance: latency-sensitive or privacy-critical agent components operate locally to preserve performance and data control, while non-sensitive outputs, open-source model weights, aggregated/anonymized insights, synthetic data, evaluation benchmarks, model cards, and rich metadata can be selectively shared through secure channels, federated learning protocols, or institutional repositories. By implementing the FAIR (Findable, Accessible, Interoperable, Reusable) principles via persistent identifiers (Digital Object Identifiers, DOIs), machine-readable metadata standards, standardized APIs, and reusable licenses (e.g., Creative Commons Attribution, CC BY), these systems support discoverability, accessibility, interoperability, and reuse without requiring full cloud migration. This approach allows institutions to retain the core strengths of on-premise infrastructure while actively participating in and benefiting from the global scientific commons.
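As a rough illustration of machine-readable metadata for a shared, non-sensitive output, the sketch below builds a record and checks it for a small set of required fields. The field names, the `missing_fair_fields` helper, the placeholder identifier, and the repository URL are all hypothetical; an institution would normally follow an established metadata schema instead.

```python
import json

# Assumed minimal field set; real deployments would adopt a published schema.
REQUIRED_FIELDS = ("identifier", "title", "license", "access_url", "format")


def missing_fair_fields(record: dict) -> list:
    """List required metadata fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


record = {
    "identifier": "doi:10.0000/placeholder",                     # placeholder, not a real DOI
    "title": "Anonymized aggregate evaluation results",
    "license": "CC-BY-4.0",
    "access_url": "https://repository.example.org/records/1",   # hypothetical repository
    "format": "text/csv",
}

# Only export records that carry every required field.
if not missing_fair_fields(record):
    print(json.dumps(record, indent=2))
```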

Ultimately, on-premise AI agent architecture empowers organizations across education, academia, and industry to fully leverage AI’s transformative potential without sacrificing security, control, or long-term affordability.

DECLARATIONS

Acknowledgments

The author acknowledges the support from Akashic Technology Co., Ltd.

Authors’ contributions

The author contributed solely to the article.

Availability of data and materials

Not applicable.

AI and AI-assisted tools statement

Not applicable.

Financial support and sponsorship

None.

Conflicts of interest

Yin, H. is affiliated with Akashic Technology Co., Ltd., and has declared no conflicts of interest.

Ethical approval and consent to participate

Not applicable.

Consent for publication

Not applicable.

Copyright

© The Author(s) 2026.

REFERENCES

1. Silver, D.; Huang, A.; Maddison, C. J.; et al. Mastering the game of Go with deep neural networks and tree search. Nature 2016, 529, 484-9.

3. Keith, J. A.; Vassilev-Galindo, V.; Cheng, B.; et al. Combining machine learning and computational chemistry for predictive insights into chemical systems. Chem. Rev. 2021, 121, 9816-72.

4. Chen, Y.; Huang, X.; He, Y.; et al. Data-driven strategies for designing multicomponent molten catalysts to accelerate the industrialization of methane pyrolysis. ACS. Catal. 2025, 15, 11003-12.

5. Tarca, A. L.; Carey, V. J.; Chen, X. W.; Romero, R.; Drăghici, S. Machine learning and its applications to biology. PLoS. Comput. Biol. 2007, 3, e116.

6. Zitnik, M.; Nguyen, F.; Wang, B.; Leskovec, J.; Goldenberg, A.; Hoffman, M. M. Machine learning for integrating data in biology and medicine: principles, practice, and opportunities. Inf. Fusion. 2019, 50, 71-91.

7. Yin, H.; Sun, Z.; Wang, Z.; et al. The data-intensive scientific revolution occurring where two-dimensional materials meet machine learning. Cell. Rep. Phys. Sci. 2021, 2, 100482.

8. Wang, Z.; Sun, Z.; Yin, H.; et al. Data-driven materials innovation and applications. Adv. Mater. 2022, 34, e2104113.

9. Zhang, D.; Chen, Y.; Liu, C.; et al. Accelerating catalyst materials discovery with large artificial intelligence models. Angew. Chem. Int. Ed. Engl. 2026, 65, e26150.

10. Vaswani, A.; Shazeer, N.; Parmar, N.; et al. Attention is all you need. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, California, USA, December 4-9, 2017; von Luxburg, U., Guyon, I., Bengio, S., Wallach, H., Fergus, R., Eds.; Curran Associates Inc.: Red Hook, NY, USA, 2017; pp 6000-10. https://dl.acm.org/doi/10.5555/3295222.3295349. (accessed 2026-04-16).

11. Naveed, H.; Khan, A. U.; Qiu, S.; et al. A comprehensive overview of large language models. ACM. Trans. Intell. Syst. Technol. 2025, 16, 1-72.

12. Karanikolas, N.; Manga, E.; Samaridi, N.; Tousidou, E.; Vassilakopoulos, M. Large language models versus natural language understanding and generation. In Proceedings of the 27th Pan-Hellenic Conference on Progress in Computing and Informatics, Lamia, Greece, November 24-26, 2023; Karanikolas, N. N., G., Marinagi, C., Kakarountas, A., Voyiatzis, I., Eds.; Association for Computing Machinery: New York, NY, USA, 2024; pp 278-90.

13. Sapkota, R.; Roumeliotis, K. I.; Karkee, M. AI Agents vs. Agentic AI: a conceptual taxonomy, applications and challenges. Inf. Fusion. 2026, 126, 103599.

14. Wang, S.; Wang, F.; Zhu, Z.; Wang, J.; Tran, T.; Du, Z. Artificial intelligence in education: a systematic literature review. Expert. Syst. Appl. 2024, 252, 124167.

15. Tapalova, O.; Zhiyenbayeva, N. Artificial intelligence in education: AIEd for personalised learning pathways. Electron. J. E-Learn. 2022, 20, 639-53.

16. Zawacki-Richter, O.; Marín, V. I.; Bond, M.; Gouverneur, F. Systematic review of research on artificial intelligence applications in higher education - where are the educators? Int. J. Educ. Technol. High. Educ. 2019, 16, 39.

17. Gao, W.; Liu, Q.; Yue, L.; et al. Agent4Edu: generating learner response data by generative agents for intelligent education systems. In Proceedings of the Thirty-Ninth AAAI Conference on Artificial Intelligence and Thirty-Seventh Conference on Innovative Applications of Artificial Intelligence and Fifteenth Symposium on Educational Advances in Artificial Intelligence, February 25-March 4, 2025; Walsh, T., Shah, J., Kolter, Z., Eds.; AAAI Press: Palo Alto, CA, USA, 2025; pp 23923-32.

18. Ma, Q. AI-driven personalized learning systems for enhancing educational outcomes in data-driven environments. In 2025 9th International Symposium on Innovative Approaches in Smart Technologies (ISAS), Gaziantep, Turkiye, June 27-28, 2025; IEEE: New York, NY, USA, 2025; pp 1-4.

19. Wang, Y. Artificial intelligence in student management systems to enhance academic performance monitoring and intervention. Sci. Rep. 2025, 15, 35122.

20. Baker, R. S.; Martin, T.; Rossi, L. M. Educational data mining and learning analytics. In The Handbook of Cognition and Assessment; Rupp, A. A., Leighton, J. P., Eds.; John Wiley & Sons, Inc., 2016; pp 379-96.

21. Zhang, D.; Jia, X.; Liu, H.; et al. Cloud synthesis: a global closed-loop feedback powered by autonomous AI-driven catalyst design agent. AI. Agent. 2025, 1.

22. Abou Ali, M.; Dornaika, F.; Charafeddine, J. Agentic AI: a comprehensive survey of architectures, applications, and future directions. Artif. Intell. Rev. 2025, 59, 11.

23. Huanbutta, K.; Burapapadh, K.; Kraisit, P.; et al. Artificial intelligence-driven pharmaceutical industry: a paradigm shift in drug discovery, formulation development, manufacturing, quality control, and post-market surveillance. Eur. J. Pharm. Sci. 2024, 203, 106938.

24. Li, C.; Chang, Q.; Fan, H. Multi-agent reinforcement learning for integrated manufacturing system-process control. J. Manuf. Syst. 2024, 76, 585-98.

25. Puvvadi, M.; Arava, S. K.; Raut, A. S.; Santoria, A.; Prasanna Chennupati, S. S.; Vardhan Puvvadi, H. A comprehensive survey of generative AI agents: transforming predictive demand forecasting and supply chain optimization strategies. In 2025 7th International Conference on Intelligent Sustainable Systems (ICISS), India, March 12-14, 2025; IEEE: New York, NY, USA, 2025; pp 546-53.

26. Summary of the Amazon DynamoDB Service Disruption in the Northern Virginia (US-EAST-1) Region. https://aws.amazon.com/message/101925/. (accessed 2026-04-16).

27. Amazon says AWS cloud service back to normal after outage disrupts businesses worldwide. https://www.reuters.com/business/retail-consumer/amazons-cloud-unit-reports-outage-several-websites-down-2025-10-20/. (accessed 2026-04-16).

28. Cloudflare outage on November 18, 2025. https://blog.cloudflare.com/18-november-2025-outage/. (accessed 2026-04-16).

29. CLOUD Act Resources. https://www.justice.gov/criminal/cloud-act-resources. (accessed 2023-10-24).

30. Pan, G.; Chodnekar, V.; Roy, A.; Wang, H. A cost-benefit analysis of on-premise large language model deployment: breaking even with commercial LLM services. In 2025 IEEE International Conference on Big Data (BigData), Macau, China, December 8-11, 2025; IEEE: New York, NY, USA, 2025; pp 2234-9.

31. Munhoz, V.; Bonfils, A.; Castro, M.; Mendizabal, O. A performance comparison of HPC workloads on traditional and cloud-based HPC clusters. In 2023 International Symposium on Computer Architecture and High Performance Computing Workshops (SBAC-PADW), Porto Alegre, Brazil, October 17-20, 2023; IEEE: New York, NY, USA, 2023; pp 108-14.

32. Pan, X.; Zhang, M.; Ji, S.; Yang, M. Privacy risks of general-purpose language models. In 2020 IEEE Symposium on Security and Privacy (SP), San Francisco, CA, USA, May 18-21, 2020; IEEE: New York, NY, USA, 2020; pp 1314-31.

33. Huang, H.; Li, Y.; Jiang, B.; et al. A middle path for on-premises LLM deployment: preserving privacy without sacrificing model confidentiality. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, Suzhou, China, November 4-9, 2025; Christodoulopoulos, C., Chakraborty, T., Rose, C., Peng, V., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2025; pp 8332-70.

34. Farzizada, A.; Bök, P.-B.; Große-Kampmann, M. Evaluating cloud and on-premises hosting solutions: a comparative study for the Azerbaijani market. In Proceedings of the 6th Workshop on Secure IoT, Edge and Cloud systems, Seoul, Republic of Korea, November 10-14, 2025; Association for Computing Machinery: New York, NY, USA, 2025; pp 1-6.

35. Radas, J.; Risse, B.; Vogl, R. Building UniGPT: a customizable on-premise LLM-solution for universities. In Proceedings of EUNIS 2024 annual congress in Athens, Athens, Greece, June 5-7, 2024; Desnos, L., Desnos, J. F., Bolis, S., Merakos, L., Ferrell, G., Tsili, E., Roumeliotis, M., Eds.; EasyChair: Manchester, UK, 2025; pp 108-16.
